Coding languages are sets of rules that people (i.e., developers) use to tell computers how to execute instructions to complete a task. They’re the ultimate two-way communication channel between programmers and computers, just as human language is the intermediary between two or more people.

Truthfully, the number of these languages keeps growing every year. Improvements in processing speed and other technologies make it possible for almost anyone with internet access (and some coding knowledge) to contribute to and expand upon the syntax of existing languages, or create new ones on a whim – kind of.

To that end, programming, coding, or software development has become a lucrative career option for many people who previously didn’t have access to these resources.

There are around 700 programming languages today. In terms of popularity, Stack Overflow’s 2022 survey shows that JavaScript is the most popular scripting language, while Python and PHP received 48% and 21% votes respectively from professional developers working in multiple IT fields. So, deciding which language (or multiple) to learn can be a daunting task.

Thankfully, we’ve compiled a list of the most popular backend languages, along with their respective frameworks, libraries, and runtime environments, all in one place.

Let’s get into it.

Key takeaways

Backend definition: Backend development focuses on the "data access layer"—the server-side logic that users don't see.

Top beginner languages: JavaScript (Node.js), Python, and Go are highly recommended for beginners due to their readability and large communities.

Database Management: Understanding the difference between Relational (SQL) and Document-oriented (NoSQL/MongoDB) databases is crucial for data architecture.

Cloud & DevOps: Modern backend engineering involves managing infrastructure through cloud providers such as AWS, Azure, and GCP, often using automation tools like Docker and Kubernetes.

Frameworks: Frameworks such as Laravel (PHP), Spring Boot (Java), and Express (JS) provide pre-built tools to speed up development.

In the coding universe, ‘backend’ refers to the part of a program, website, or application that users do not see. In programming terms, the ‘backend’ is usually known as the data access layer, while the ‘frontend’ is called the presentation layer.

For example, most websites today are dynamic. This means content is generated as you go. A dynamic page usually contains one or multiple scripts that run on a web server each time you access a page on that website. The scripts generate all of the content on that page, which is then sent to and displayed by the user’s browser.

Every process that takes place before the page is displayed in a web browser is considered part of the ‘backend’.
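To make the idea of a dynamic page a bit more concrete, here’s a minimal sketch in Python (picked purely for brevity; any backend language would do). The function name `render_page` and the visitor data are made up for illustration, and a real site would sit behind a web server and a template engine.

```python
from datetime import datetime
from html import escape


def render_page(visitor_name: str, now: datetime) -> str:
    """Build the HTML for one request; this runs on the server, not in the browser."""
    greeting = "Good morning" if now.hour < 12 else "Hello"
    # escape() guards against HTML injection from user-supplied input.
    return (
        "<html><body>"
        f"<h1>{greeting}, {escape(visitor_name)}!</h1>"
        f"<p>Page generated at {now:%H:%M}.</p>"
        "</body></html>"
    )


# Each request produces fresh content, which is then sent to the browser.
page = render_page("Ada", datetime(2024, 1, 1, 9, 30))
```

Everything inside `render_page` counts as backend work: the browser only ever receives the finished HTML string.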

Some languages are mostly suited for backend development, others for frontend development, and some can be used to develop software and apps in both paradigms.

Coders who are proficient in both frontend and backend languages are called fullstack developers. The process itself is known as fullstack development.

However, what is the most popular programming language today?

Let’s examine some of the top picks.

JavaScript

JavaScript is a scripting, text-based programming language that enables users to create interactive web pages, embedded hardware controls, mobile apps, and other software. It runs on both the server-side and the client-side.

In fact, you may already know server-side and client-side scripts as backend and frontend, respectively: server-side equates to backend, and client-side to frontend.

Further, the server-side is also where the source code is stored, while the client-side usually denotes the user’s web browser, where you can run either some or all of the code.

On the backend side of the development process, devs can choose from among some of the most popular JavaScript frameworks and libraries to build an app.

A small subset of these frameworks includes:

Node.js

Nest.js

Express

Koa

Apart from interactive web pages, website elements and other apps, devs can also use JavaScript to develop any given compatible backend infrastructure and build web servers utilizing Node.js.

Lastly, JavaScript can be used to build games as well. Creating the next browser-based Wordle might be the way to go for new and upcoming developers looking to hone their JavaScript coding skills.

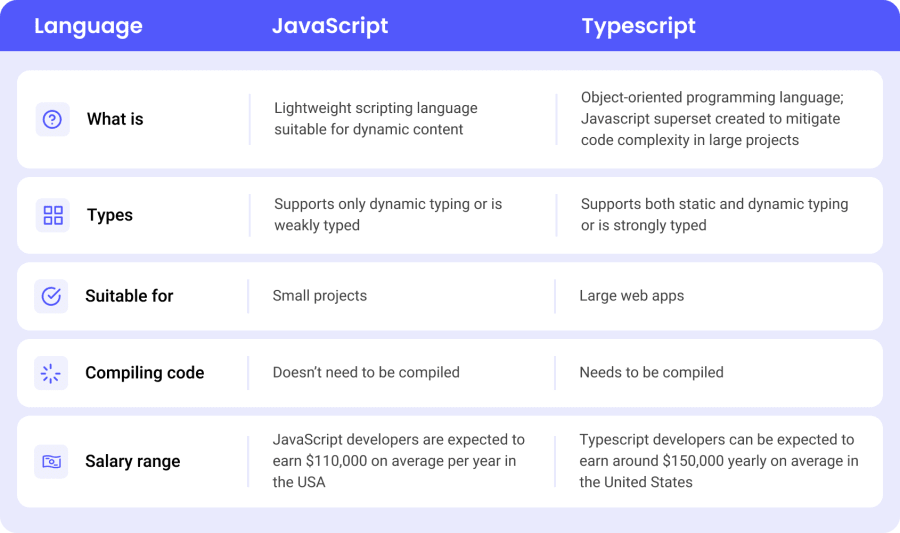

JavaScript VS TypeScript

TypeScript is a syntactical superset of JavaScript, often dubbed the modern-day JavaScript. In other words, TypeScript is JavaScript with additional features, most notably static typing. It’s considered both a language and a set of tools, and it’s currently being developed and maintained by Microsoft.

The main differences between JavaScript and TypeScript are as follows:

| Feature | JavaScript | TypeScript |
|---|---|---|
| Project scale | Small tasks / Quick prototypes | Large, complex enterprise projects |
| Development speed | Faster initial setup | Slower setup, but fewer long-term bugs |
| Team environment | Solo or small teams | Large teams with multiple collaborators |

Given all of the above, which one should you choose? The answer is: it depends.

Speaking of JavaScript/TypeScript frameworks for backend development, here’s a list of some of the most popular ones:

Node.js

Node.js is an open-source, JavaScript runtime environment that runs on the Chrome V8 JavaScript engine.

To understand Node.js (including all upcoming frameworks), you have to understand the difference between:

Runtime Environment

Framework

Library

A runtime environment (RTE) is the environment in which a program or app is executed. It’s the combination of software and hardware that makes the code run.

A framework is a set of pre-made solutions, tools, and processes that solve a specific problem.

What about libraries? In programming, a library is a collection of pre-written code that developers can use to build applications. It’s like using existing audio clips to create a song, rather than recording your own audio and potentially ending up with a worse song.

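Currently, there’s an ongoing debate over whether Node.js is a runtime environment or a framework, and at this point I’m too scared to side with either group. For the record, the official documentation describes Node.js as a JavaScript runtime environment, but you’ll often see it loosely referred to as a framework.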

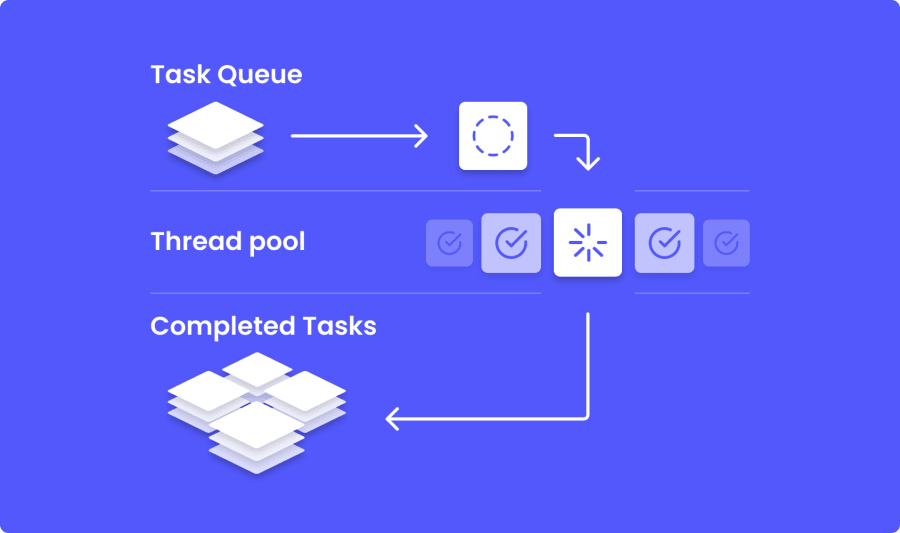

Here’s a diagram that might help you understand this better:

In most cases, this diagram will be factual but its usefulness is strongly correlated to the context of your specific problem. Thread carefully.

And as far as threading is concerned, Node.js supports asynchronous I/O on a single thread, but it’s more complicated than that. In reality, Node.js is not purely JavaScript. Parts of it are written in C++, which allows Node.js to hand certain internal tasks, such as DNS lookups and file system operations, to a pool of background threads, while network calls are handled through the operating system’s non-blocking I/O.

All of the processing as explained above is done asynchronously (in Node.js), meaning that events in the code are executed independently of the main program flow. In synchronous programming, one event has to end in order for another event to begin.
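The difference is easier to see in action. The sketch below uses Python’s `asyncio` rather than Node.js, so treat it as an analogy and not as Node.js code: two simulated I/O calls (the names `slow` and `fast` and the delays are invented) overlap on one thread, and the shorter one finishes first.

```python
import asyncio
import threading

log = []
thread_ids = set()


async def fetch(name: str, delay: float) -> str:
    thread_ids.add(threading.get_ident())
    log.append(f"start {name}")
    # await hands control back to the event loop while we "wait on I/O".
    await asyncio.sleep(delay)
    log.append(f"end {name}")
    return name


async def main() -> list:
    # Both requests are in flight at once, yet they share one thread.
    return await asyncio.gather(fetch("slow", 0.2), fetch("fast", 0.05))


results = asyncio.run(main())
```

In a synchronous version, `fast` could not even start until `slow` had finished. Here the log reads start slow, start fast, end fast, end slow, and `thread_ids` contains a single entry.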

But let’s back up a bit and tackle the basics.

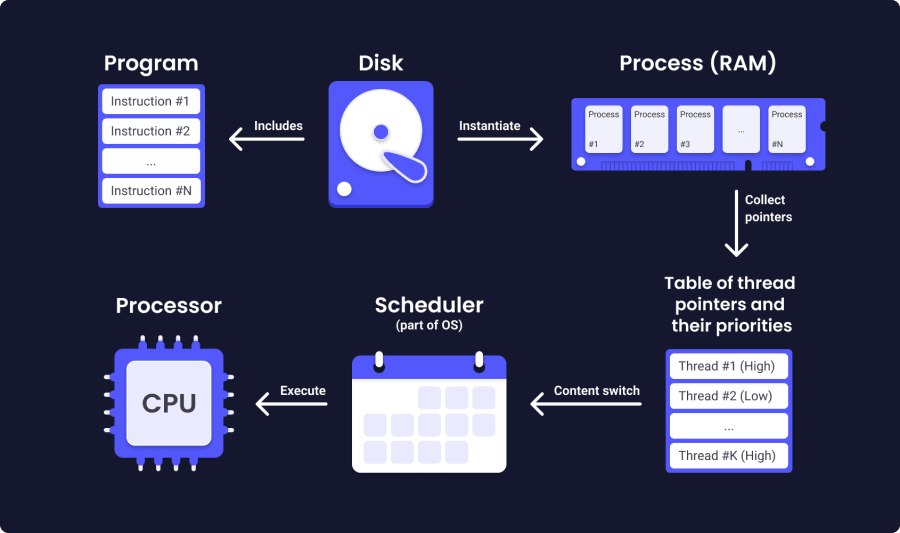

A process is a program in execution. It starts when a program undergoes execution. One process can have multiple threads.

A thread is the smallest sequence of programmed instructions that can be managed independently by a scheduler, which is usually part of the OS.

The main difference between the two is that threads run in the same process on a shared memory space, while processes run in different memory locations.

Multiprocessing is the use of a single computer system with two or more processors (CPUs) to perform calculations and complete tasks. If a computer system has multiple processors, this means more processes can be executed simultaneously.

Multi-threading refers to an execution model that allows multiple threads (code blocks) to run concurrently within a single process.

Lastly, a thread pool is a group of idle threads that are ready to be given tasks, which avoids latency and optimizes performance and execution.

In this type of model, threads are reused across many short-lived tasks instead of being constantly ‘created’ and ‘destroyed’, which makes the allocation of computer resources more efficient.
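As a rough illustration of a thread pool, here’s a short Python sketch using the standard `concurrent.futures` module. The task (`handle_task`, squaring a number) and the pool size of four are arbitrary choices for the example.

```python
from concurrent.futures import ThreadPoolExecutor
import threading


def handle_task(n: int) -> tuple:
    # Return the result plus the name of the worker thread that ran it.
    return n * n, threading.current_thread().name


# At most four worker threads are shared by twenty short-lived tasks.
with ThreadPoolExecutor(max_workers=4, thread_name_prefix="worker") as pool:
    outcomes = list(pool.map(handle_task, range(20)))

squares = [value for value, _ in outcomes]
workers_used = {name for _, name in outcomes}
```

Twenty tasks get done, but `workers_used` never holds more than four names, because the pool keeps handing new tasks to threads that already exist.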

So:

JavaScript runs synchronous operations on a single thread

Node.js runs asynchronous operations on a single thread (excluding some internal tasks and Node.js libraries, which can be run in a multi-threaded way)

With that out of the way, let’s take a look at some popular and widely used Node.js frameworks.

Nest.js

Straight from the official GitHub page, Nest.js is a progressive Node.js framework for building efficient, scalable and reliable server-side applications. Nest.js is built with TypeScript and uses modern JavaScript, thus preserving compatibility with pure JavaScript.

Nest.js combines elements of object-oriented programming (OOP), functional programming (FP), and functional reactive programming (FRP), whilst also using Express, Fastify, and a ton of other libraries and third-party plugins.

Express.js

Express.js is a flexible Node.js web framework that offers a strong set of features for developing both web and mobile apps. As it stands, it’s considered to be the most popular Node.js framework.

Popular Express.js features:

Dynamically renders HTML pages by passing arguments to templates

Allows the creation of handlers for requests featuring different HTTP verbs at different routes (URL paths)

Allows setting up middleware to respond to HTTP requests

You can find the full list of Express.js middleware modules on the official Express.js website.

Note: middleware is an intermediary software that runs between applications and ensures everything communicates properly across two or multiple environments.
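To show how routes and middleware fit together, here’s a toy dispatcher written in Python. It is not Express.js and doesn’t mirror its API; the names `route`, `dispatch`, and `logging_middleware` are hypothetical and only illustrate the pattern of verb-plus-path handlers wrapped by middleware.

```python
from typing import Callable, Dict, List, Tuple

Request = Dict[str, str]
Handler = Callable[[Request], str]
Middleware = Callable[[Request, Handler], str]

routes: Dict[Tuple[str, str], Handler] = {}
middlewares: List[Middleware] = []
access_log: List[str] = []


def route(method: str, path: str):
    """Register a handler for one HTTP verb at one URL path."""
    def register(handler: Handler) -> Handler:
        routes[(method, path)] = handler
        return handler
    return register


def logging_middleware(request: Request, next_handler: Handler) -> str:
    # Runs before the route handler, then passes the request along.
    access_log.append(f"{request['method']} {request['path']}")
    return next_handler(request)


middlewares.append(logging_middleware)


@route("GET", "/hello")
def say_hello(request: Request) -> str:
    return "Hello!"


@route("POST", "/hello")
def create_hello(request: Request) -> str:
    return "Created"


def dispatch(request: Request) -> str:
    handler = routes.get((request["method"], request["path"]), lambda r: "404 Not Found")
    # Wrap the handler in each middleware; the first registered ends up outermost.
    for mw in reversed(middlewares):
        handler = (lambda req, mw=mw, nxt=handler: mw(req, nxt))
    return handler(request)
```

Calling `dispatch({"method": "GET", "path": "/hello"})` returns `"Hello!"`, while the same path with `POST` hits a different handler. Every request passes through the logging middleware first.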

Koa.js

Koa.js is an open-source Node.js web framework developed by the team behind Express.js.

As per their official website, Koa.js is described as a smaller and more expressive foundation for web apps and APIs. It uses async functions to avoid callbacks and improve error handling, but it doesn’t bundle any middleware modules in its core.

Instead, Koa.js provides a set of methods for writing servers in an easy and efficient manner.

Other notable Koa.js features:

Supports the use of both sync and async functions

Allows the use of ES6 generators that significantly tidy up an otherwise more complex asynchronous Node.js code

Extremely lightweight at only 550 lines of code

Additional JavaScript frameworks

Some JavaScript frameworks are the unsung heroes of commit repositories worldwide. Meaning, they help immensely with producing viable solutions to otherwise difficult coding challenges, but don’t get the recognition they deserve.

Some of these include:

Meteor.js

Meteor.js is a comprehensive, fullstack JavaScript framework that uses the Distributed Data Protocol (DDP) to sync data between client and server. In addition, Meteor.js features a ton of tools, solutions, and code templates to make the lives of web developers that much easier.

Strapi

Strapi is an open-source, headless, 100% JavaScript-based CMS (content management system) primarily for web content creation, editing, and management. It’s excellent for creating your preferred web application without in-depth knowledge of JavaScript or programming theory. Strapi also offers the option to deploy both on traditional servers and cloud hosting services.

Fastify

Fastify is a low-overhead, open-source Node.js web framework focused on delivering a great developer experience through its reliability, plugin support, and speed. According to its own benchmarks, it can serve up to 30,000 requests per second, making it one of the fastest web frameworks in the ever-growing JavaScript/Node.js ecosystem to date.

C++

C++ is a text-based, object-oriented, multi-purpose programming language, often dubbed the ‘Swiss Army knife of coding languages.’ Its usage extends far and wide across multiple domains and ecosystems, including the underlying architecture behind Bloomberg, Facebook (Meta), Amazon, and plenty more.

The five basic concepts of C++ are as follows:

Variables: a way of storing, keeping, and retrieving values or data for continuous use. Once declared, variables can be used multiple times within the scope of their initial declaration. They are considered the foundation of any computer programming language.

Syntax: a set of words, symbols, rules, and expressions that define the correct execution of a statement in any given programming language. You must follow these predefined rules in your code; otherwise, you’ll get errors when the code is compiled or run.

Data structures: a system of declaring and retrieving variables that optimizes the coding process. For example, you can store multiple variables in one or more data structures to make the code shorter and easier to read, thereby improving code execution and delivering a better overall experience for the end user. Arrays are a popular way of working with data structures.

Control structures: the way in which the flow of execution is directed. By default, a C++ program executes its statements from top to bottom (one at a time), and control structures such as conditionals, loops, and function calls let it jump to other parts of the code based on what the code itself is trying to do. To that end, the program can repeat the same code, skip parts of or the entire code, or, if you’re unlucky, get stuck in an infinite loop.

Tools: colloquially, tools are software bundles that make your life easier. There are thousands of different tools across multiple programming languages in all environments. The most important one (by unspoken consensus) is the IDE — Integrated Development Environment. IDE ensures that all your folders, files, and other documentation are neatly organized and provides a clean way to access them.
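Since these concepts apply to pretty much every language, here’s how the first few look in a handful of lines. The sketch is in Python for brevity, not C++ (the C++ syntax differs, but the ideas carry over), and the grades are made-up sample data.

```python
# Variable: a named value that can be reused within its scope.
passing_grade = 60

# Data structure: one list holds many values instead of many separate variables.
scores = [72, 45, 90, 58]

# Control structures: a loop repeats code, a conditional picks a branch.
passed = []
for score in scores:
    if score >= passing_grade:
        passed.append(score)

average = sum(scores) / len(scores)
```

The loop visits each score once, and the `if` decides whether that score ends up in `passed`.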

One of the most popular (and widely known) C++ frameworks is Unreal Engine 4, which is explained in detail below.

Unreal 4

Unreal is a game engine used to create video games. It’s mainly written in C++, but it also features the added functionality of a visual scripting method called Blueprints.

In essence, Blueprints allows the game creator to drag-and-drop functionalities and game mechanics instead of coding them from scratch. Each method (hand-coding in C++ VS visual scripting with Blueprints) has its strengths and weaknesses, depending on the project.

In addition, Unreal Engine 4 includes a physics engine, graphics engine, sound engine, input/gameplay framework, and online module. Its successor, Unreal Engine 5, was released in 2022.

C#

C# is an object-oriented programming language that combines the powerful logic of C++ with the intuitive programming of Visual Basic. C# is based on C++ (which itself has its roots in C) and follows some of the same rules as in Java, which we’ll get into later.

The most prominent C# features are:

Garbage collection: management of the release and allocation of memory in application development. Memory can now be reclaimed from unreachable and unused objects.

Lambda expressions: creating anonymous functions, which in turn support functional programming techniques.

Exception handling: a structured way of handling unexpected situations when a program runs, providing an extensive approach to error detection and error handling.

Language Integrated Query or LINQ: powerful, declarative query syntax that allows filtering, grouping, and ordering data source operations with minimal code.

Finally, C# also supports Versioning. Versioning makes sure that development frameworks, programs, and libraries are allowed to evolve over time with full compatibility in mind.
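A few of these features are easier to grasp with a small example. The sketch below is in Python, not C#, so the syntax is only an approximation of the ideas: a lambda expression, a declarative filter in the spirit of LINQ, and structured exception handling. The order data and the `parse_total` helper are invented for illustration.

```python
orders = [
    {"customer": "Ana", "total": 120.0},
    {"customer": "Ben", "total": 35.5},
    {"customer": "Ana", "total": 60.0},
]

# Lambda expression: an anonymous function passed as an argument.
by_total = sorted(orders, key=lambda order: order["total"], reverse=True)

# Declarative query, similar in spirit to LINQ's where/select.
large_customers = [o["customer"] for o in orders if o["total"] > 50]


def parse_total(text: str) -> float:
    """Exception handling: recover from bad input instead of crashing."""
    try:
        return float(text)
    except ValueError:
        return 0.0
```

In C#, the filter would typically be written with LINQ’s `Where` and `Select`, and the error handling with `try`/`catch`.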

C# VS F#

F# is an open-source, strongly typed, general-purpose programming language for creating clear and robust code with emphasis on performance and speed. Compared to C#, F# features built-in async programming, relies on type inference so that most type declarations can be omitted, and includes a lightweight syntax for more compact code.

But that’s in theory.

In practice, it’s a little bit different. Here’s how F# performs against C#:

.NET libraries interaction: most .NET libraries are created in C#. Often, they are easier to use in C# rather than in F#.

Covariance/Contravariance: supported in C#, not supported by F# as of yet. Covariance allows a more derived type to be used implicitly where a less derived type is expected (for example, with arrays and generic interfaces), while contravariance allows the reverse.

Implicit casting: F# does not support implicit casts, while C# does. Therefore, libraries that rely on implicit casts are easier to use in C#.

Task runtime performance: async tasks run faster in C# than in F#. This boils down to the fact that the C# compiler supports asynchronous code natively and generates optimized code.

Immutability: In F#, immutability is used by default (unless you deliberately use the ‘mutable’ keyword). In object-oriented programming, Immutability refers to an immutable object—an object whose state cannot be changed after creation. Immutability is better for potential parallelization, simpler to understand, and considered more secure.

Type inference: In F#, this refers to fewer annotations, making refactoring simpler.

Simplicity of use: F# is easier and simpler, period. In F#, there are currently 71 keywords. C# contains more than 110 keywords. In addition, C# has 4 ways to define a record, while F# has only one.

Expressions make debugging easier and code simpler. C# features both statements and expressions, while in F#, there are only expressions.
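Immutability is probably the item on this list that benefits most from a quick demo. The sketch below uses a frozen Python dataclass as a stand-in for an F# record (so it’s an analogy, not F# code); the `Point` type is hypothetical.

```python
from dataclasses import dataclass, replace, FrozenInstanceError


@dataclass(frozen=True)
class Point:
    x: int
    y: int


origin = Point(0, 0)

# "Changing" an immutable object means building a new one.
moved = replace(origin, x=5)

try:
    origin.x = 10  # any attempt to mutate is rejected
    mutation_blocked = False
except FrozenInstanceError:
    mutation_blocked = True
```

Because `origin` can never change, it can be shared freely between threads or functions without anyone worrying about who modified it.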

In conclusion, both languages have their strengths and weaknesses, so the unceremonious answer to the question “Which one is better between C# and F#” would be: it depends on the project you’re working on and the type of system you are striving to build.

With that thoroughly combed through, let’s consider some popular C# frameworks.

.NET

.NET is a completely free, open-source developer platform that supports writing a wide range of applications in several languages: C#, F#, Visual Basic, and Visual C++.

Practically speaking, .NET allows you to write and build programs using C# – a powerful combo akin to peanut butter and jelly, but I digress.

Heads up: sometimes you can encounter the term ‘net framework’ in the wild. .NET and .NET Framework are two different things – well, kind of.

According to the official page, .NET is the entire development environment, while .NET Framework refers to the original version of .NET. Additionally, the .NET Standard is a formal specification of the APIs common across different .NET versions. This ensures that the same .NET code can execute across different .NET implementations.

When you decide to use .NET, what you’re really doing is downloading a bundle of programs, editors, and other utilities that do the following:

Translate the C# code into computer-readable instructions

Define data types for storing and retrieving information in your applications, including strings, numbers, and dates

Provide additional software-building utilities, like tools for writing the program output on your screen

The newest implementation of .NET is .NET Core.

.NET Core

As I stated above, .NET Core is the newer implementation of the .NET development platform (since version 5, it’s simply called .NET). The original version (.NET Framework) is used to write Windows (desktop) and server-based applications. The newer version (.NET Core) is used to write server applications compatible with Windows, Linux, and macOS. Since version 3.0, .NET Core also supports desktop applications through Windows Forms and WPF, but only on Windows.

Regarding applicability, .NET Core is best utilized when you work on cross-platform projects that need to be compatible with multiple operating systems such as Windows, Linux, and Mac.

Additionally, .NET Core is a good choice for working with a server-oriented architecture called microservices.

Microservices represent an architectural style that builds one application using multiple small services, where each service runs its own process. In the Java world, frameworks for building them include Jersey, Spring Boot, and Dropwizard.

Lastly, .NET Core is excellent for working with Docker containers. In fact, Microservices and Containers are often used together to improve efficiency and scalability. Docker containers are lightweight software packages that allow for seamless integration across multiple computing environments in a completely standardized manner.

ASP.NET

ASP.NET is an open-source web development framework for writing web applications on the .NET framework. It succeeds its predecessor, ASP (Active Server Pages), as a more reliable, more flexible, and more powerful framework that offers security and speed all at once.

Essentially, ASP.NET is an extension of the .NET platform and is designed specifically for developing websites, web applications, and other web-based programs.

In a nutshell, here’s how the different components of ASP.NET work:

Language: in ASP.NET, you can write your projects in either C#, F#, or Visual Basic.

Libraries: ASP.NET includes all base .NET libraries, as well as some additional libraries for common web solutions, such as MVC (Model View Controller). The Model View Controller pattern provides functionality for building your app into three layers: the display layer, the business layer, and the input control.

Common Language Runtime: CLR or the Common Language Runtime is the place where all code for your .NET applications is executed. In addition, ASP.NET features a very useful tool for web developers called Razor. Razor is a templating syntax used to develop dynamic web pages with C# or Visual Basic in the ASP.NET framework.

Unity (Game Engine)

Unity is a powerful cross-platform game engine with its own editor for game development, providing tools such as physics, 3D rendering, collision detection, and more. The Unity Editor works much like an IDE (Integrated Development Environment): a single application that bundles the tools needed to build a project, in this case tailored to creating games.

In fact, rather than hard-coding a game from scratch, you can use Unity to craft a game with the help of its many features – neatly tucked in a single place. Some of these features include folder and file navigation, a timeline tool for producing and editing animations, and a powerful visual editor with drag-and-drop functionalities and almost limitless possibilities for producing a full-fledged game.

Unity is written in C# and C++ (with some exceptions), and it supports C# as the main scripting language for game mechanics for both 2D and 3D games.

Go (Golang)

Go (Golang) is an open-source, statically typed, and high-performing programming language developed by Google. It was first announced in 2009, and version 1.0 was released in 2012.

It’s one of the simplest programming languages, featuring an easy learning curve and almost nonexistent barrier to entry for beginner programmers. In fact, some have anecdotally claimed that total beginners can build an application in Go in just a few hours – albeit with proper guidance.

What’s up with the name, though? Well, the original name of the language is Go, while Golang comes (incorrectly) from the now-defunct domain name golang.org (redirects to go.dev). In a nutshell, Go and Golang are one and the same.

What about features?

Go supports concurrency. In programming terms, concurrency refers to running two or more processes in an ‘interweaving’ way via context switching on shared resources. In concurrent programming, processes make progress in an overlapping fashion, even on a single CPU or core. In Go, the unit of concurrency is the goroutine, a lightweight thread managed by the Go runtime.
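To picture what ‘interweaving’ via context switching means, here’s a toy round-robin scheduler. It’s written in Python with generators, not in Go, and it is a simplified model rather than how the Go runtime actually schedules work; the `worker` and `run_round_robin` names are made up.

```python
from collections import deque


def worker(name: str, steps: int):
    for step in range(1, steps + 1):
        # yield is the "context switch": pause here and let another task run.
        yield f"{name} step {step}"


def run_round_robin(tasks) -> list:
    """Interleave tasks on a single core by switching after every step."""
    queue = deque(tasks)
    timeline = []
    while queue:
        task = queue.popleft()
        try:
            timeline.append(next(task))
            queue.append(task)  # not finished: go to the back of the line
        except StopIteration:
            pass  # finished: drop the task
    return timeline


timeline = run_round_robin([worker("A", 2), worker("B", 3)])
```

Neither task runs to completion before the other starts. The timeline comes out as A step 1, B step 1, A step 2, B step 2, B step 3, all on a single thread.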

Additionally, Go ships with a powerful standard library and toolset that reduces the need for third-party packages. The tools include gofmt (code formatting), go run (compiles and runs a program in one step), go get (downloads packages and adds them as dependencies), and godoc (generates documentation from source code).

In Go, there is no virtual machine. The code directly compiles to machine code, which allows for a more efficient compilation time and faster execution.

Finally, the official website features a playground area where you can go (heh) and unleash your imagination in multiple creative ways.

Database Management

Simply speaking, database management means working with data, and usually a significant amount of data. Anything from optimizing databases to migrating large chunks of data across multiple servers falls within the realm of data administration duties.

To that end, efficient data management simply cannot be done without the proper knowledge of programming languages, of which there are quite a few to pick from.

Some of these include:

SQL

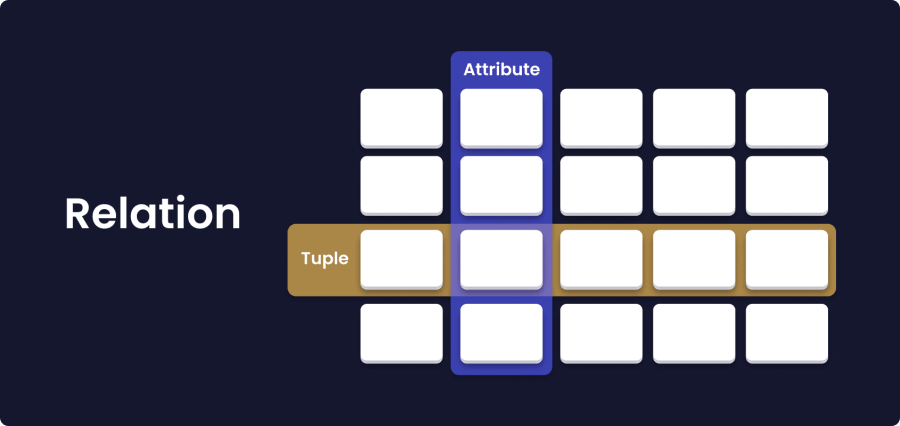

SQL, or Structured Query Language, is a type of language for working with and manipulating databases and data. Currently, it’s the standard language for processing data in so-called ‘Relational Database Management Systems’ (RDBMS). Some of these include Oracle, Access, Ingres, and Microsoft SQL Server, among others.

MySQL

MySQL is a relational database management system that uses SQL to process data.

What is a database?

A database is a structured set of data. For example, a shopping list is a collection of data, and so is a collection of the multiple passwords that you’re using to access your accounts (which are hopefully encrypted, but that’s a different story altogether).

In particular, the relational database model stores data in columns and rows, with relationships between elements following strict logic. So, we could say, an RDBMS is the set of tools and software used to store, manipulate, query, and retrieve data from a database.
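Here’s a small taste of the relational model in practice. To keep the example self-contained, it uses SQLite through Python’s built-in `sqlite3` module instead of MySQL; the basic SQL is largely the same, but the `products` table and its contents are invented.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database
conn.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, price REAL)")
conn.executemany(
    "INSERT INTO products (name, price) VALUES (?, ?)",
    [("Keyboard", 49.9), ("Mouse", 19.5), ("Monitor", 189.0)],
)

# Each row is one record; each column is one attribute of that record.
cheap = conn.execute(
    "SELECT name, price FROM products WHERE price < ? ORDER BY price", (50,)
).fetchall()
conn.close()
```

The query describes what to fetch (products under 50, cheapest first), and the database engine works out how to fetch it.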

Some of the key features of MySQL include:

Compatibility: often associated with web apps and services, MySQL was designed with the ‘ultimate’ compatibility in mind. In fact, the RDBMS runs on all major platforms: Windows, macOS, and Unix-based OSs (like Linux).

Open-source model: individuals or organizations can freely use (and contribute to) MySQL under the GPL. Those who want to distribute MySQL as part of proprietary software without the GPL’s obligations can purchase a commercial license from Oracle.

Ease of use: MySQL uses a tabular paradigm that is easy to grasp and very intuitive to use. The MySQL ecosystem features a comprehensive set of tools for end-to-end software development, including data analysis and server management.

PostgreSQL

PostgreSQL is an open-source, relational database management system. It supports both relational (SQL) and non-relational (JSON) queries. The main difference between SQL and JSON is that SQL is a language used to specify the operations you want to perform on data. JSON, on the other hand, is a data format: it only describes the data itself (its fields and values).

The general use cases for PostgreSQL can be found anywhere where there is – you guessed it – data. Here are some examples.

LAPP stack: the Linux, Apache, PostgreSQL, and PHP (Python, Perl) development environment is the perfect use case for PostgreSQL. Here, PostgreSQL fulfills the role of a robust database management system that backs many complex web applications and websites.

Geospatial database: together with the PostGIS extension, PostgreSQL supports geospatial database systems (GIS).

Wide support: PostgreSQL supports all major programming languages, including JavaScript (Node.js), Python, C++, C#, Perl, Go, and more.

MSSQL (Microsoft SQL Server)

MSSQL, as the name suggests, is a relational database management system (RDBMS) developed and supported by Microsoft. MSSQL supports a wide array of applications set in analytics, business management, and transaction processing.

MSSQL is built on top of the general SQL language and uses a row-based table model that relates data elements across different tables, thereby avoiding the redundancy of storing the same data in multiple places within a single database.
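The point about relating data across tables is easier to see with two tables and a join. This sketch runs on SQLite via Python’s `sqlite3` module, not on SQL Server, so the dialect differs in places; the `customers` and `orders` tables are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, city TEXT);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES customers(id),
        total REAL
    );
    INSERT INTO customers VALUES (1, 'Ana', 'Lisbon'), (2, 'Ben', 'Oslo');
    INSERT INTO orders VALUES (1, 1, 120.0), (2, 1, 60.0), (3, 2, 35.5);
""")

# The customer's city is stored once; orders point to it via customer_id.
totals = conn.execute("""
    SELECT c.name, c.city, SUM(o.total)
    FROM orders AS o
    JOIN customers AS c ON c.id = o.customer_id
    GROUP BY c.id
    ORDER BY c.name
""").fetchall()
conn.close()
```

If Ana moves to another city, only one row in `customers` needs updating, no matter how many orders she has placed.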

The main underlying component of Microsoft SQL Server is the SQL Server Database Engine. This engine handles data storage, data processing, and security.

Further, the engine also operates on relational logic and is responsible for managing tables, pages, files, indexes, and data transactions. Additional procedures like triggers and views are also executed by the SQL Server Engine.

MongoDB

MongoDB is a document-oriented, cross-platform database that uses collections and documents instead of rows and columns, as in the relational database model. MongoDB also uses key-value pairs for JSON-like documents with optional database schemas. A schema is the blueprint for the database's structure.

In MongoDB, databases contain collections of documents. Each of these documents can differ from the others, featuring a varying number of fields, different sizes, and different content (although not necessarily so).

The structure of the documents aligns more closely with what developers call ‘objects’ and ‘classes’ in their respective programming languages. In this model, documents take the place of rows and columns, and each document is structured as a set of key-value pairs.
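For a feel of the document model, here’s a sketch that mimics a collection with plain Python dictionaries. It does not use MongoDB or its driver; the `find` and `find_with_tag` helpers are hypothetical stand-ins for real queries, and the user data is invented.

```python
import json

# A "collection" of documents; note the fields differ between documents.
users = [
    {"_id": 1, "name": "Ana", "email": "ana@example.com"},
    {"_id": 2, "name": "Ben", "tags": ["admin", "beta"]},
    {"_id": 3, "name": "Cy", "address": {"city": "Oslo"}, "tags": ["beta"]},
]


def find(collection: list, **criteria) -> list:
    """Return documents whose top-level fields equal the given criteria."""
    return [
        doc for doc in collection
        if all(doc.get(key) == value for key, value in criteria.items())
    ]


def find_with_tag(collection: list, tag: str) -> list:
    # Documents without a "tags" field are simply skipped.
    return [doc for doc in collection if tag in doc.get("tags", [])]


beta_names = [doc["name"] for doc in find_with_tag(users, "beta")]
as_json = json.dumps(users[2], sort_keys=True)
```

No schema had to be declared up front: the third document has a nested `address` that the others lack, and queries simply ignore documents that don’t carry the field they’re looking for.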

Finally, MongoDB environments are easily scalable. In fact, MongoDB allows you to work with millions of documents across hundreds of clusters in a hierarchical structure of your choosing.

Firebase Realtime Database

Firebase Realtime Database is a NoSQL cloud database that syncs data across all clients in real-time, with the data being available even after your application goes offline.

FRD stores data in the cloud as JSON and synchronizes it in real-time with all clients. For example, if you’re building an application using JavaScript, Apple and Android SDKs, all of these clients share one Realtime Database that gets automatically updated with the newest batch of data.

Key features include:

Real-time updating: updates data within milliseconds instead of working with HTTP requests. Projects can be done faster and more efficiently without worrying about networking code.

Offline availability: the Firebase Realtime Database SDK (Software Development Kit) persists your data to disk. This allows all of your Firebase applications to remain responsive offline. Whenever the connection is reestablished, the client device syncs with the current server state and updates all changes on the spot.

Scalability: your application data can be supported at scale. Meaning, you can split your data across multiple Firebase Realtime Database instances to get the most out of your paid plan. Currently, the free plan doesn’t support database operations at scale.
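The offline behavior described above can be modeled in a few lines. This is a toy Python class, not the Firebase SDK: `OfflineFirstStore` is a made-up name, a plain dictionary plays the role of the cloud database, and conflict resolution is ignored entirely.

```python
class OfflineFirstStore:
    """Toy client: writes apply locally at once and sync when online."""

    def __init__(self, server: dict):
        self.server = server          # stands in for the cloud database
        self.local = dict(server)     # local copy kept on the device
        self.pending = []             # writes made while offline
        self.online = True

    def set(self, key: str, value) -> None:
        self.local[key] = value       # the app stays responsive either way
        if self.online:
            self.server[key] = value
        else:
            self.pending.append((key, value))

    def reconnect(self) -> None:
        self.online = True
        # Replay queued writes in order, then pull the server's state.
        for key, value in self.pending:
            self.server[key] = value
        self.pending.clear()
        self.local = dict(self.server)


cloud = {"score": 1}
client = OfflineFirstStore(cloud)
client.online = False
client.set("score", 2)
offline_view = (client.local["score"], cloud["score"])
client.reconnect()
```

While offline, the device already sees the new score while the cloud still holds the old one; after `reconnect()`, both agree again.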

Ruby

Ruby is a dynamic, open-source, object-oriented scripting programming language with a main emphasis on simplicity and productivity. It features one of the most intuitive syntaxes a developer could ask for, like so:

`puts "Hello World!"`

This would be the entire code to print the famous ‘Hello World!’ string, period. It’s as simple as that!

Ruby leverages the power of object-oriented programming alongside the procedural nature of scripting languages to produce a product that’s both handy and practical.

In fact, the main ideas behind Ruby’s inception came from Ruby’s creator, Yukihiro Matsumoto, who wanted to bridge the best concepts from his five favorite languages: Smalltalk, Perl, Ada, Eiffel, and Lisp. Ruby was conceived in 1993 and first released to the general public in 1995.

In Ruby, everything is considered an object. In programming lingo, properties are known as instance variables, while actions are referred to as methods. Unlike in other languages, numbers and other primitive types are also treated as objects.

Ruby treats method closures as blocks. By attaching a closure to a method, developers can describe how that method should work.

Finally, Ruby doesn’t use variable declarations. Instead, it uses simple naming conventions to denote the scope of variables:

var - local variable

@var - instance variable

$var - global variable

Here are some popular Ruby backend frameworks.

RoR (Ruby on Rails)

Ruby on Rails (also known as RoR) is an open-source framework for the web. It’s created with Ruby, and it’s best suited for developing web applications—regardless of complexity. In other words, Rails allows you to create websites.

Here are some functionalities:

Active Record: the object-relational mapping (ORM) layer for working with data in databases.

Routing: the built-in routing mechanism in Rails maps URLs to concrete actions.

MVC: RoR uses MVC, the Model-View-Controller architecture. The model is responsible for handling data logic, the view part displays the information to the end user, and the controller part of the MVC architecture controls and updates the flow of data between the view and the model.
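The routing and MVC ideas above are not unique to Rails, so here is a small, framework-free Python sketch of how they fit together. To be clear, this is not Rails code: the class names, the sample articles, and the `dispatch` helper are all hypothetical and only meant to show the flow from a URL to a controller action, then to the model and the view.

```python
# Model: owns the data and the data logic
class ArticleModel:
    def __init__(self):
        self._articles = {1: "Hello Rails", 2: "Routing basics"}

    def find(self, article_id):
        return self._articles.get(article_id)


# View: turns data into something the end user sees
def article_view(title):
    return f"<h1>{title}</h1>" if title else "<h1>Not found</h1>"


# Controller: connects a request to the model and the view
class ArticlesController:
    def __init__(self, model):
        self.model = model

    def show(self, article_id):
        return article_view(self.model.find(article_id))


controller = ArticlesController(ArticleModel())

# Routing table: maps a URL prefix to a concrete controller action
routes = {"/articles/": controller.show}


def dispatch(url):
    """Find the action for a URL and call it with the ID taken from the path."""
    for prefix, action in routes.items():
        if url.startswith(prefix):
            return action(int(url[len(prefix):]))
    return "<h1>404</h1>"


print(dispatch("/articles/1"))  # <h1>Hello Rails</h1>
```

A real framework adds a lot on top (HTTP verbs, parameter validation, templates), but the division of labor stays the same.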

Shopify (e-Commerce platform)

Shopify is a web-based eCommerce platform that allows sellers to set up online stores and sell their products online. Shopify is built on Ruby on Rails, and, like the simplicity of RoR, it offers a convenient way to run an online business without opening a brick-and-mortar store (though you can do that as well with their Shopify POS offering).

So, how did an e-commerce platform find its way onto a list of programming languages and why?

As platforms grow, the complexity of their architecture expands until it becomes necessary to constantly maintain, update, and further develop them to meet the ever-growing demands of the online market.

With time, there comes a need for talent specializing in exactly this – developing and maintaining Shopify and Shopify-based online stores. In a way, the platform becomes its own developing environment and the talent behind it becomes ‘designated platform’ developers – Shopify developers.

Java

Java is a class-based object-oriented programming language developed and released by Sun Microsystems in 1995. It’s designed to have as few implementation dependencies as possible.

Java also follows the so-called WORA principle (write once, run anywhere), meaning that compiled Java code can run on any platform that supports Java without recompilation.

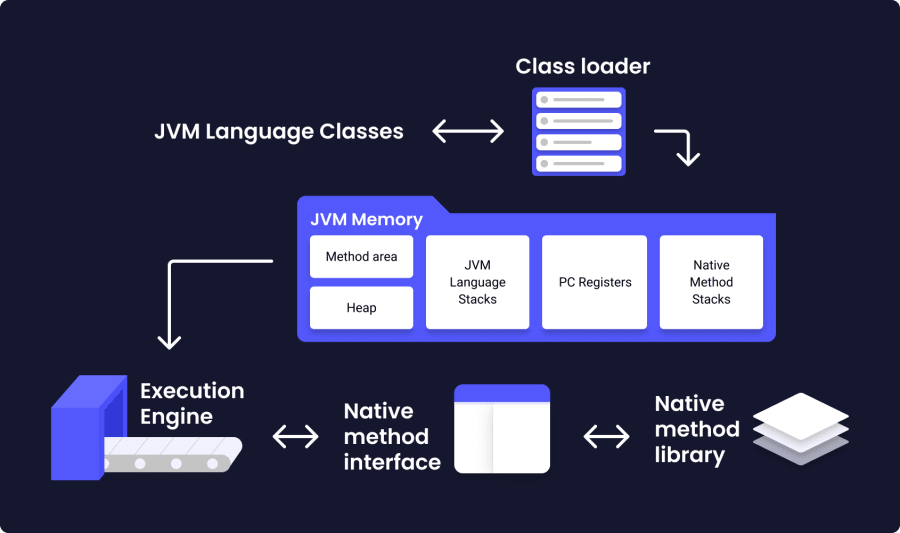

One important feature of Java is the Java Virtual Machine (JVM). A JVM is a virtual machine that allows computers to run Java programs (and programs written in other languages) that have been compiled to Java bytecode. In turn, Java bytecode is the set of instructions executed by the Java Virtual Machine, playing a role similar to the machine code that a C or C++ compiler produces for a physical processor.
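If the idea of a virtual machine executing bytecode feels abstract, a toy example may help. The Python sketch below runs a made-up, three-instruction "bytecode" on a small stack machine. It is only an illustration of the concept: the opcodes `PUSH`, `ADD`, and `MUL` are invented for this example and are not the real JVM instruction set.

```python
def run(bytecode):
    """Execute a list of (opcode, argument) pairs on a small stack machine."""
    stack = []
    for op, arg in bytecode:
        if op == "PUSH":
            stack.append(arg)
        elif op == "ADD":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "MUL":
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        else:
            raise ValueError(f"unknown opcode: {op}")
    return stack.pop()


# "Compiled" form of the expression (2 + 3) * 4
program = [("PUSH", 2), ("PUSH", 3), ("ADD", None), ("PUSH", 4), ("MUL", None)]
result = run(program)
print(result)  # 20
```

The same `program` list would produce the same result on any machine that has this interpreter, which is the essence of "write once, run anywhere".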

Some of the most prominent Java features are:

Popularity: I’ve been caught saying this a lot, but Java is also one of the more popular programming languages, ‘snatching’ an impressive ~15% out of the popularity pie according to the TIOBE index.

Platform-independence: programs written in Java on one machine can run on another machine.

Accessibility: Java is easy to learn, but hard to master (as is the case with most coding languages out there).

Below, you can find some of the more popular Java backend frameworks.

Spring & Spring Boot

Spring is a powerful open-source Java framework that enables developers to build comprehensive, reliable, and scalable enterprise applications, including ones based on Jakarta EE (formerly Java EE). Jakarta EE is a set of open specifications, maintained by the Eclipse Foundation, for developing business-oriented Java applications.

One simple example of Spring's applicability comes from one of its modules, Spring JDBC. By using the JDBC Template from the Spring JDBC module, developers can build applications faster and with significantly fewer lines of code.

Spring Boot is an extension of the Spring framework. With Spring Boot, producing an application is quick and requires very little ‘boilerplate’ code. In programming, boilerplate colloquially refers to repetitive code that has to be written again and again with little variation. To that end, Spring Boot allows programmers to build applications that, as Forrest Gump might put it, just run.

Scala

Scala is a multi-paradigm, statically typed programming language that combines features of both object-oriented (values as objects) and functional (functions as values) programming. It derives its name from ‘Scalable Language’, and its source code is compiled to Java bytecode and executed on the JVM (Java Virtual Machine).

Here’s how it works:

Type inference: Instead of denoting the types of your variables, the powerful type inference within the Scala compiler will figure them out in your stead.

Traits and classes: Scala allows you to combine multiple traits into a single class.

Concurrency and distribution: In Scala, data can be processed asynchronously using futures and promises. While you run asynchronous work, the main program thread is ‘free’ to do other computations at the same time.
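The futures idea from the last point can be sketched in a few lines. Note that the example below uses Python’s standard `concurrent.futures` module rather than Scala’s own `Future` API, so treat it as an analogy; the `slow_square` function is a made-up stand-in for slow work.

```python
from concurrent.futures import ThreadPoolExecutor
import time


def slow_square(n):
    time.sleep(0.1)  # stand-in for slow I/O or a long computation
    return n * n


with ThreadPoolExecutor(max_workers=2) as pool:
    future = pool.submit(slow_square, 7)  # starts running in the background
    main_work = sum(range(10))            # the main thread keeps working meanwhile
    squared = future.result()             # block only when the value is needed

print(main_work, squared)  # 45 49
```

The future acts as a placeholder for a value that isn’t ready yet, so the main thread only waits at the moment it actually asks for the result.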

Apache Spark

Apache Spark is an open-source engine for processing large-scale data, executing data engineering tasks, and training machine learning algorithms on single-node machines or clusters. It’s currently maintained by the Apache Software Foundation.

Apache Spark is written primarily in Scala and offers APIs for Scala, Python, Java, and R. The benefits of using this data analytics engine are multiple:

APIs: Spark provides easy-to-use APIs for working with large datasets. These APIs include over 100 operators suitable for transforming structured data.

Speed: created with performance in mind, Spark can be up to 100 times faster than Hadoop MapReduce for in-memory workloads, while Scala is generally considered to be faster than, say, Python. In fact, in 2014 Apache Spark set a world record for large-scale on-disk sorting.

Unified engine: Through the utilization of its multiple standard, higher-level libraries, Spark supports a wide range of SQL queries, machine learning and data processing operations as well. Developers can use these libraries to increase productivity, improve performance, and create complex workflows – unhindered by common large-scale data operation ‘hiccups’ like lag, downsampling, or framework integration problems – to name a few.

DevOps & Cloud Software

The term DevOps stems from the combination of two other words: namely, DEVelopment and OPerationS. In broader terms, DevOps is meant to illustrate a collaborative approach to solving problems, mainly in the world of application development and related IT fields.

More precisely, DevOps can be defined as a philosophy of work that aims to bridge gaps in communication, task delegation, and solution adoption between two or more teams – or even within a single team.

In and of itself, DevOps is not considered a technology. Instead, environments that run DevOps methodologies aim to implement optimal automation, adopt iterative software development, and deploy programmable infrastructure approaches into their day-to-day processes across all tech branches within a given company.

On top of that, DevOps also covers all team-building activities and strives to foster cohesion among developers, system administrators, and IT project managers.

Other areas DevOps tends to optimize can include tools, services, job responsibilities, and best practices – among other ‘highlights’ in software development.

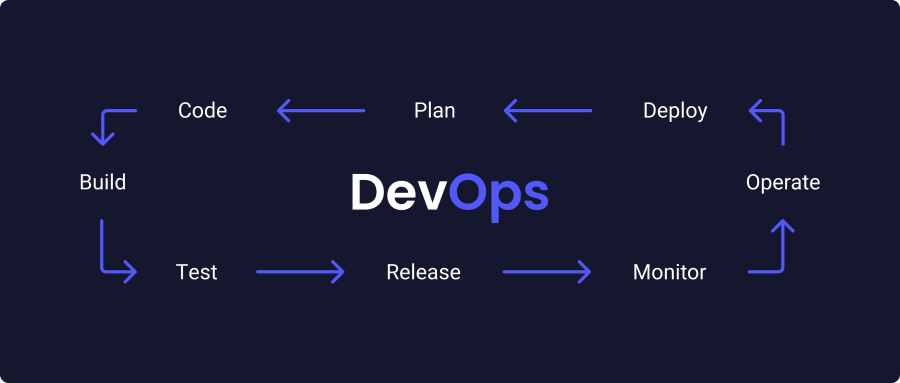

DevOps can also be visualized as an infinite loop:

The loop begins at Plan, then Code, Build, Test, goes through Release, Deploy, Operate, and Monitor, and finally ends with Plan, which starts the loop all over again. It’s a simple but powerful methodology that, in a perfect world, aims to produce the perfect digital product with the most intuitive UI/UX, solving a major problem for users worldwide.

Other common methodologies that stem from the DevOps approach are:

Continuous Delivery, Continuous Integration, or Continuous Deployment – the CI/CD processes within DevOps enable development teams to deliver code updates more frequently and to improve their digital product as reliably and efficiently as possible. It’s an agile methodology that continuously shifts the software development team’s focus toward quality and meeting business requirements.

DevOps adoption: this covers practices like real-time monitoring, collaboration management, and incident management, with equal emphasis on all three. Real-time monitoring is an important part of ensuring that all processes (within a given system) run smoothly and that, when incidents occur, they are accounted for and addressed to restore normal operations as quickly as the optimal solution allows. Collaboration management and incident management are ‘deployed’ immediately after a ‘hiccup’ in normal operations and therefore serve as additional tools to real-time monitoring by enabling problem-solving right there and then.

Cloud computing: delivering any kind of computing service over the internet is generally considered part of cloud computing. Things like servers, databases, analytics, and other software solutions all fall under this umbrella, offering faster innovation, flexible resource allocation, and the ability to work with large amounts of data. Generally speaking, cloud computing means that you rent servers (computer infrastructure) from a data center that will meet the computational needs of your business at scale.

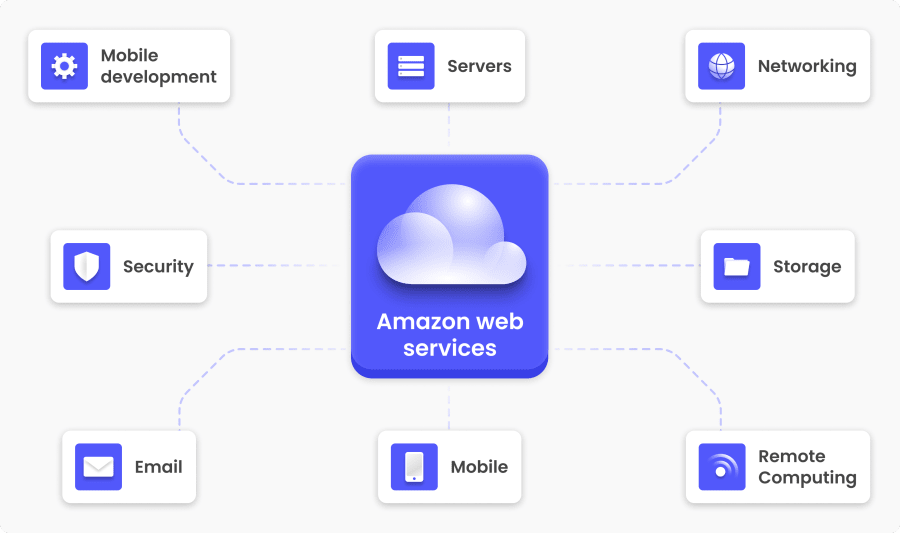

AWS (Amazon Web Services)

Amazon Web Services, or simply AWS, is a comprehensive cloud computing platform that offers three main service models (among more than 200 services):

| Model | Description | Key benefit |
|---|---|---|
| IaaS | Infrastructure as a Service | Virtualizes physical hardware (servers/networking) |
| PaaS | Platform as a Service | Provides environments for building and shipping apps |
| SaaS | Software as a Service | Web-based apps accessed via browser (no installation) |

AWS was first envisioned as an in-house computing infrastructure built to handle Amazon’s own online retail needs.

Today, AWS is one of the largest and most complex cloud platforms in the world, supporting multiple innovative technologies, including machine learning, artificial intelligence, data analytics, the Internet of Things, and more. Most services within AWS are available to small businesses, large enterprises, and government agencies, with data centers serving customers in up to 190 countries worldwide.

Another subset of AWS services includes:

Storage

Database

Data management

Networking

Security

Analytics

Mobile development

Governance

Virtual reality

Microsoft Azure

Microsoft Azure (formerly known as Windows Azure) is the second-largest cloud computing platform in the world, and one of the fastest-growing at that. Azure currently operates more than 200 data centers around the world, grouped into regions, with plans to add 12 more regions for a total of 54 in the near future. Microsoft Azure is free to start, after which you pay only for the services you use as you go.

In a similar manner to AWS, Microsoft Azure offers 200 different services divided into 18 categories, some of which include:

Compute Services

Storage

Networking

Mobile Development

IoT

AI (Artificial Intelligence)

Security

DevOps

Media

Identity

Web Services

Under compute, Azure offers the following services:

Virtual machine: users are able to create virtual machines running Windows, Linux, and other popular operating systems in mere seconds.

Service fabric: developing microservices becomes simpler and less expensive. As I mentioned above, microservices are an architectural style that structures an application as a collection of small, independently deployable services rather than a single large one.

Cloud service: lets you build applications on the cloud. Once the application goes live, Azure manages load balancing, health monitoring, and provisioning.

Google Cloud Platform

Google Cloud Platform (or GCP) is a public cloud computing platform that allows customers to use its services either for free or on a pay-per-use model. The resources for these services are hosted in multiple Google data centers around the world and offer a wide array of functionalities, including data management, AI tools, machine learning, web and video services, and plenty more.

What is the difference between Google Cloud and Google Cloud Platform? In short, Google Cloud is a bundle of services that can help companies digitalize sooner rather than later. On the other hand, Google Cloud Platform provides public cloud services for hosting web-based applications. GCP is considered to be part of Google Cloud.

Besides GCP, Google Cloud also offers:

Google Workspace (formerly G Suite): includes Gmail, organizational management applications, and other tools.

Android and Chrome OS Enterprise: unique Chrome OS and Android versions that allow users to connect their devices with other web applications.

APIs (Application Programming Interfaces): mainly targeted toward AI, machine learning, and enterprise mapping solutions. APIs provide a ‘neat’ way for different applications to communicate with each other.

Compared to AWS and Azure, GCP offers fewer services with a cloud model primarily geared toward software developers. However, GCP also features comprehensive documentation that guides users through each step of implementing the service for their personal or business needs.

Docker

Docker is a set of PaaS (Platform as a Service) tools and services for building, running, monitoring, and delivering software in small packages called ‘containers’.

In the olden days, running a web application required setting up a server, installing Linux, and hoping that your application wouldn’t get too much traction too soon. If it did, you’d have to do some load balancing and include a second server to prevent your app from crashing under too much traffic (if only).

Nowadays, web-based applications rarely rely on a single server. Rather, most popular web applications are hosted on multiple systems in an environment commonly referred to as ‘the cloud’. In fact, thanks to multiple innovations involving Linux cgroups and kernel namespaces, nowadays servers essentially ‘evolved’ from hardware-based technologies into software.

These software-based servers are known as containers, and they represent a combination of the Linux OS they’re installed on and a runtime environment that accounts for the contents of the container.

Essentially, container tooling falls into three main categories:

Builder: a tool or tools used to build the container, some of which include Dockerfile (Docker) and Distrobuilder (LXC).

Engine: the application that runs the container. In Docker, this is called the ‘docker’ command and the Docker daemon — also known as ‘dockerd’. The ‘docker daemon’ is the runtime environment that manages application containers.

Orchestration: the technology behind managing multiple containers, including OKD and Kubernetes.

Terraform

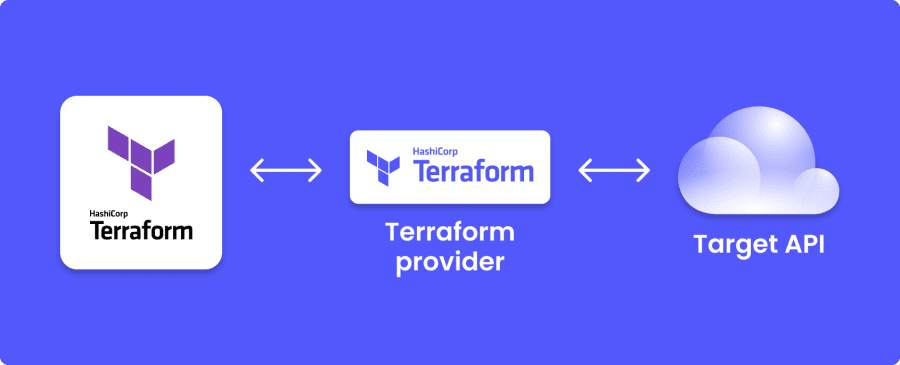

Terraform (also known as HashiCorp Terraform) is an open-source IaC (Infrastructure as Code) tool that gives you complete control over managing, reusing, and sharing both on-premises and cloud computing resources through human-readable configuration files.

On-premises deployment means keeping your IT resources within your company’s internal IT infrastructure. In a cloud hosting model, all resources are kept on the cloud.

Terraform works through the application programming interfaces (APIs) of cloud platforms and other services. With these APIs, Terraform can create, manage, and operate IT resources directly in the cloud and connect to multiple cloud providers simultaneously.

In essence, Terraform can work with virtually any platform that exposes an API, as long as there is a provider to connect Terraform to that platform.

What is a provider?

Providers are plugins that let Terraform talk to platforms and services such as AWS, Azure, GCP, Kubernetes (I’ll get to this one in a minute), GitHub, and more. Currently, Terraform can connect through at least 1700 providers to manage thousands of resources, applications, and other services in the cloud. This list continues to grow.

On a general level, Terraform works in three main stages:

Write: the step where developers define resources, which may span multiple cloud providers and services. One example of this is a configuration that deploys an application on virtual machines in a VPC (Virtual Private Cloud) network, along with a load balancer and security groups.

Plan: the part where Terraform creates an execution plan describing what it will create, update, or ‘destroy’, based on the existing infrastructure and your configuration.

Apply: when approved, Terraform proceeds with the plan by performing each operation in the correct order while respecting any resource dependencies. Continuing with the example, if you update the properties of your VPC and change the number of virtual machines in that virtual private cloud, Terraform will recreate the VPC before scaling the virtual machines.
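The three stages can be mimicked in a short Python sketch. This is not how Terraform is implemented, and it doesn’t use Terraform’s configuration language. The resource names, the `plan` and `apply_plan` functions, and the dictionaries standing in for "desired" and "current" infrastructure are all assumptions for illustration. The only library call is `graphlib.TopologicalSorter` from the Python standard library (3.9+), used here to order operations by dependency.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Write: the desired resources and what each one depends on (hypothetical names)
desired = {
    "vpc": {"depends_on": [], "cidr": "10.0.0.0/16"},
    "security_group": {"depends_on": ["vpc"], "port": 443},
    "vm": {"depends_on": ["vpc", "security_group"], "count": 2},
}

# What currently exists; "old_bucket" is no longer part of the configuration
current = {
    "vpc": {"depends_on": [], "cidr": "10.0.0.0/16"},
    "vm": {"depends_on": ["vpc", "security_group"], "count": 1},
    "old_bucket": {"depends_on": []},
}


def plan(desired, current):
    """Plan: compare desired and current state and list the actions needed."""
    actions = {}
    for name, spec in desired.items():
        if name not in current:
            actions[name] = "create"
        elif current[name] != spec:
            actions[name] = "update"
    for name in current:
        if name not in desired:
            actions[name] = "destroy"
    return actions


def apply_plan(desired, actions):
    """Apply: order create/update steps so that dependencies come first."""
    graph = {name: spec["depends_on"] for name, spec in desired.items()}
    order = TopologicalSorter(graph).static_order()
    steps = [(actions[name], name) for name in order if name in actions]
    steps += [("destroy", n) for n, a in actions.items() if a == "destroy"]
    return steps


actions = plan(desired, current)
steps = apply_plan(desired, actions)
print(steps)
```

In this toy run, the unchanged VPC needs no action, the security group is created before the virtual machines that depend on it are updated, and the leftover bucket is destroyed.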

Ansible

Ansible is an open-source tool that provides software/process automation solutions for developers, system administrators, and architects. With Ansible, IT professionals can automate all kinds of IT processes, including configuration management, intra-service orchestration, application deployment, and more. Ansible doesn’t require additional infrastructure, so it can be deployed simply and easily.

Generally speaking, Ansible connects to the nodes that you want to automate and pushes out small programs that execute instructions that would otherwise have been carried out manually.

These small programs are the so-called Ansible modules, which Ansible executes over SSH by default (a standard for secure authentication, connection, and encrypted file transfer). When the automation process is complete, Ansible removes the modules from the node.

Granted, the phrase Ansible module sounds complex, but keep in mind that all the work is done by Ansible itself and not by the user. In fact, modules are designed to model the desired state of a given system, which means that each module checks the node it runs on and changes only what is needed to reach that state.

In Ansible, there are control nodes and managed nodes. A control node is the computer that runs Ansible. A managed node is a device controlled by Ansible from the control node. Ansible operates by connecting to managed nodes over the network and then sending Ansible modules to them.
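The "desired state" idea is easier to see in code. Below is a minimal Python sketch of a module-like function that only changes a node when it has to. It is not a real Ansible module and doesn’t use Ansible’s API: the `ensure_package` function and the dictionaries standing in for managed nodes are hypothetical.

```python
def ensure_package(node, name, state="present"):
    """Toy 'module': make the node match the desired state, report if it changed."""
    installed = node["packages"]
    if state == "present" and name not in installed:
        installed.add(name)
        return {"changed": True}
    if state == "absent" and name in installed:
        installed.remove(name)
        return {"changed": True}
    return {"changed": False}  # already in the desired state: do nothing


# Hypothetical managed nodes, represented as plain dictionaries
managed_nodes = {
    "web1": {"packages": {"nginx"}},
    "web2": {"packages": set()},
}

# The "control node" runs the same task against every managed node, twice
first_run = {host: ensure_package(node, "nginx") for host, node in managed_nodes.items()}
second_run = {host: ensure_package(node, "nginx") for host, node in managed_nodes.items()}
```

On the first run only `web2` reports a change, and on the second run nothing changes at all. Being able to run the same task repeatedly without side effects is what makes this style of automation safe to repeat.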

In addition to the aforementioned SSH standard, modules and nodes can also operate using a different authentication mechanism.

Kubernetes

Kubernetes (also known as ‘k8s’) is an open-source platform that provides automation solutions for deploying, maintaining, and scaling Linux containers. Broadly speaking, Kubernetes allows you to automate most of the processes involved in running clusters of hosts that, in turn, run Linux containers.

As you can see, even the definition itself is more complex than it needs to be, and this is where Kubernetes ‘slides in’ to save developers the trouble of navigating through all of that complexity by using automation.

What are Kubernetes clusters?

A group of hosts that run Linux containers, managed together by Kubernetes, is referred to as a Kubernetes cluster. These clusters can span all three cloud computing models: public, private, and hybrid clouds.

I briefly touched on the mechanics behind containers, but what are they actually used for?

If your problem requires a solution that involves working with containers at scale, then inevitably you’ll end up needing a container orchestration tool somewhere down the line.

Lately, web developers and system admins are deploying more containers for several reasons: firstly, workload portability and deployment speed are an absolute must if you want to follow DevOps practices in any meaningful way.

Secondly, containers also simplify resource provisioning for IT administrators who constantly scramble for time, and that’s putting it lightly.

Thirdly and finally, containers simplify the process of developing applications in the cloud, improving speed and enabling a hands-on, agile approach to development across all three cloud computing models (public, private, hybrid).

Moreover, there’s a reason why Kubernetes is referred to as an orchestration tool: container orchestration tools (Kubernetes being one of them) can be thought of as conductors leading an entire orchestra.

For example, an orchestra conductor would designate how many musicians would play the violin in the group, who plays the first violin, and how loud or quiet to play the instruments during a performance.

Similarly, a container orchestrator would specify how many web server frontend containers are required, their functionality, and how to allocate resources among them.
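To picture what the orchestrator does with that specification, here is a minimal Python sketch of a reconcile step: compare how many containers you asked for with how many are running, and work out what to start or stop. It is a simplification and not Kubernetes code. The `reconcile` function, the service names, and the counts are assumptions made for illustration.

```python
def reconcile(desired_replicas, running):
    """Return the actions needed so that running containers match the desired count."""
    actions = []
    for name, wanted in desired_replicas.items():
        have = running.get(name, 0)
        if have < wanted:
            actions.append(("start", name, wanted - have))
        elif have > wanted:
            actions.append(("stop", name, have - wanted))
    return actions


desired_replicas = {"frontend": 3, "backend": 2}  # what the "conductor" asked for
running = {"frontend": 1, "backend": 4}           # what is actually running
actions = reconcile(desired_replicas, running)
print(actions)  # [('start', 'frontend', 2), ('stop', 'backend', 2)]
```

An orchestrator repeats a check like this continuously, which is why a crashed container gets replaced without anyone stepping in.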

If needed, there are multiple ways to connect a frontend with a backend using Kubernetes services (it usually requires some combination of one or multiple Kubernetes clusters, service objects, and deployments). If you were only experimenting, you are free to delete the services and deployments afterwards to clean up.

Note: Kubernetes was first developed by Google and is today maintained by the Cloud Native Computing Foundation (CNCF).

PHP

PHP is an open-source backend (server-side) scripting language primarily suitable for developing static or dynamic websites and web applications. PHP originally stood for Personal Home Page, but today the abbreviation stands for PHP: Hypertext Preprocessor.

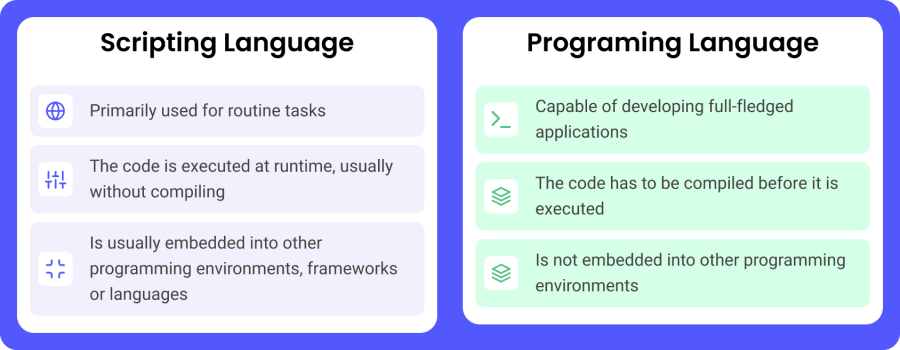


Some PHP highlights include:

Open-source: anyone who wants to contribute to the further development of PHP can do just that. This is one of the reasons PHP’s frameworks (Laravel, which we’ll also get into) are so popular among web developers.

Cross-platform: PHP runs on every popular platform, including Windows, Linux, and Mac.

Low barrier to entry: PHP features an intuitive syntax that is easy to pick up, even for absolute programming beginners (some coding knowledge would help, though).

Database-friendly: PHP connects easily to most popular relational and non-relational databases (MySQL, PostgreSQL, MongoDB) across all platforms.

Needless to say, PHP is a very powerful language. For example, a PHP file can contain both HTML tags and frontend (client-side) scripts – JavaScript being one of them.

In terms of popularity, PHP powers some of the most visited websites across categories such as eCommerce (Magento, PrestaShop), CMS (WordPress, Joomla), social networks (Facebook, Digg), and more.

Finally, there is one more thing to address, and it has something to do with the popular, brazen notion that something is experiencing a steep decline after years of ‘usurping’ the spotlight. It’s about the question “Is PHP dying?”, and the answer is: a resounding no. Today, roughly three-quarters of websites whose server-side language is known use PHP in one way or another, according to W3Techs, so that should answer that.

PHP also has its fair share of frameworks to choose from:

Symfony

Symfony is a PHP framework that includes a suite of components aimed at simplifying repetitive coding and giving you full control over your web projects and code. It uses a similar programming method to Ruby on Rails, which makes your code cleaner and easier to read.

Additionally, Symfony offers various plugins, an admin generator interface, and so-called AJAX helpers as well.

In Symfony, AJAX helpers let you avoid hand-writing the JavaScript that changes elements on a page, relying on a PHP script executed on the server instead. Simply speaking, this type of asynchronous client-server interaction (AJAX) is the backbone of highly interactive web applications.

Finally, Symfony uses the MVC (model-view-controller) pattern, making it easier to test, debug, and customize your code. Gone are the days of writing never-ending XML configuration files, replaced by applicative logic that helps to build robust applications and ‘frees up’ your time to focus on the more creative, problem-solving parts of your coding projects.

In terms of technological benefits, Symfony is popular because of its:

Flexibility: Symfony is adaptable, configurable, and fully independent. In fact, it can be thought of as a PHP framework with three main modes of use:

Fullstack: to produce full-fledged applications from scratch

Brick-by-brick model: enables developers to build applications piece by piece, using only the components they need

Microframework: allows for developing a specific feature without having to redevelop everything or install the entire framework

Speed: performance optimization can be a very challenging concept to master. In other words, it’s hard to design for speed once everything in the application falls into place on all levels. With that in mind, Symfony is one of the fastest PHP frameworks to date.

Stability: the Symfony release process is designed to preserve full compatibility between versions each time a new minor Symfony version is released (every six months) and to offer three-year support for major Symfony versions (every two years).

Laravel

Laravel is an open-source PHP framework that features a set of tools and other resources to build fast, modern, and reliable PHP applications. It has seen a surge in popularity in recent years, mainly due to its very potent ecosystem and the ever-growing additions of robust extensions, programming resources, and other compatible Laravel packages.

Just like Symfony, Laravel operates on the model-view-controller (MVC) architecture. By leveraging the Blade templating engine, Laravel can break HTML into pieces and manage them through the controller component of the MVC model.

Other tools contained within the Laravel framework include:

Eloquent: Laravel’s Object-Relational Mapper (ORM), meant for working with databases and large amounts of data.

Artisan: a command-line tool for building new models, controllers, and other software components, which provides a significantly faster way of building applications than hard-coding everything from the ground up.

IoC container: the Laravel IoC (Inversion of Control) container is a robust tool for working with class dependencies. For example, dependency injection is a method of removing hard-coded dependencies. The dependencies are instead injected during the runtime, which enables greater flexibility and easier dependency management, including dependency swapping.

Query builder: The Laravel database query builder is an interface for creating, running, and managing database queries. It can be leveraged for most database operations and works with all database systems supported by Laravel.

Unit-testing: by default, the tests directory in each Laravel application contains two directories: Unit and Feature. Unit tests focus on a small, isolated portion of the code, in most cases a single method. In fact, tests contained within the Unit directory don’t boot the Laravel application and therefore don’t have access to the application’s database or other framework services.

In turn, feature tests can test larger portions of the code, including how several objects interact with each other or even a full HTTP request to a JSON endpoint. The Laravel documentation recommends writing mostly feature tests, as they provide the most confidence that your system as a whole functions as intended.
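Dependency injection and unit testing go hand in hand, so here is a small Python sketch that shows both at once. It is not Laravel or PHP code, and it doesn’t use an IoC container: the dependency is simply passed in by hand. All class names, the email address, and the message text are hypothetical.

```python
class SmtpMailer:
    """Stand-in for a real mail service (hypothetical)."""

    def send(self, to, body):
        raise RuntimeError("would contact a real mail server")


class FakeMailer:
    """Test double that records messages instead of sending them."""

    def __init__(self):
        self.sent = []

    def send(self, to, body):
        self.sent.append((to, body))


class WelcomeService:
    # The mailer is injected rather than hard-coded, so it can be swapped
    def __init__(self, mailer):
        self.mailer = mailer

    def welcome(self, email):
        self.mailer.send(email, "Welcome aboard!")
        return True


def test_sends_one_welcome_message():
    """Unit test: exercises a single method with no database or network."""
    mailer = FakeMailer()
    service = WelcomeService(mailer)
    assert service.welcome("ada@example.com") is True
    assert mailer.sent == [("ada@example.com", "Welcome aboard!")]


test_sends_one_welcome_message()
```

Because `WelcomeService` never creates its own mailer, the test can swap in `FakeMailer` without touching the class. An IoC container automates that wiring for you in a larger application.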

Drupal

Drupal is an open-source content management system (CMS) used by millions of webmasters worldwide. It’s similar to WordPress in that, well, both are content management systems. In terms of popularity, however, WordPress takes the upper hand. But then again, WordPress is the most popular CMS – powering a staggering 43% of all websites around the globe!

Now back to Drupal.

Drupal is written in PHP, and it’s also free to download, install, and use. In fact, anyone with proper coding knowledge can contribute to the platform, while all the other contributors (the community) will keep a ‘watchful eye’ over the platform’s underlying code. This ensures that safety, security, and compliance are all accounted for within the Drupal ecosystem.

Additionally, Drupal makes publishing content very easy. It offers a reliable and robust interface with highly customizable forms to edit text, images, and other media. On top of that, Drupal also features a sophisticated user-role system for seamless role integration across the board. As a webmaster, you’ll be able to assign, restrict, and additionally configure member roles to ensure that everyone has access to exactly what they need without sacrificing security.
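A role system like this boils down to a mapping from roles to permissions. The Python sketch below shows the general idea only. It is not Drupal’s API, and the role names, permission strings, and the `can` helper are assumptions made for illustration.

```python
# Hypothetical roles and the permissions each one grants
ROLE_PERMISSIONS = {
    "anonymous": {"view content"},
    "editor": {"view content", "edit content"},
    "administrator": {"view content", "edit content", "administer users"},
}


def can(user_roles, permission):
    """A user may act if any of their roles grants the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in user_roles)


print(can(["editor"], "edit content"))      # True
print(can(["anonymous"], "edit content"))   # False
```

Restricting access then means editing the mapping rather than scattering checks throughout the code.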

The main Drupal package is called Drupal Core, while all the other additions and tools are referred to as Drupal modules. Combining these two, you will be able to build a full-blown modern website in a way that doesn’t require ‘busting out’ the entire PHP (including HTML5 + CSS3) paradigm from scratch.

Finally, Drupal follows modern object-oriented programming practices and embraces standards such as YAML and HTML5. YAML is a human-readable data serialization standard often used to write configuration files. HTML (HyperText Markup Language), currently at version 5, is the backbone of the Web, but we’ll get more into HTML and CSS later.

WordPress

WordPress is an open-source content management system (CMS) for creating websites, blogs, and commercial pages. As I stated above, it’s the world’s most popular CMS by far – and with good reason too.

WordPress is intuitive, fast, robust, reliable, and easy to use. Its sheer popularity leads webmasters to use WordPress more, which in turn increases its popularity even further.

WordPress is also very welcoming to people from non-coding backgrounds, which adds another reason for the CMS's upward traction over the years since its creation.

The WordPress core is written in PHP, while some of the benefits of using WordPress over other content management systems include:

Simplicity: WordPress is very simple and easy to use. Again, this is one of the main reasons WP has been able to outpace competitors like Joomla and Drupal over the years. In fact, you don’t need any coding background to start creating websites with WordPress.

Flexibility: WordPress is highly adaptable and suitable for everyone’s needs. Need some SEO boost for your site? Try one of the dozens of WordPress SEO plugins (Yoast SEO, All In One SEO Pack) to meet your goals head-on.

Responsiveness: WordPress, WordPress plugins, and WordPress themes (working together) can deliver a ‘remarkable’ experience for users across all devices, including desktop computers, tablets, and smartphones.

Speed: In the ideal setting and using the ideal WordPress tools, you will be able to create a WordPress website in a single day. You’ll need copyright-free images, content, and maybe some additional media, such as videos, charts, or interactive graphics. If you have all that, WordPress makes it easy to upload and arrange everything, and does so very, very fast. Note: it goes without saying, but avoid uploading large files and try to optimize your website for speed – it’s what Google recommends.

Scalability: as your website grows, the requirements to ‘keep it afloat’ will constantly change. You’ll find yourself in need of faster servers, more server space, reliable (99.9%-100%) uptime, and the option to address both horizontal and vertical scalability. WordPress websites can scale, but not all WordPress websites will be ready to handle a potential surge in incoming traffic without a significant risk of degraded performance during those peak hours.

APIs: WordPress supports thousands of APIs that allow the integration of 3rd party web services into your website. This makes it easier for all of those applications to communicate with each other and provide a seamless experience for the end user.

WooCommerce

WooCommerce is an open-source eCommerce plugin for WordPress. WooCommerce provides full online store functionality on your website, which you can add either from the WordPress dashboard or directly from the WordPress Plugin repository.

The main benefits of using WooCommerce include:

Modular framework: despite being a WordPress plugin, WooCommerce is itself a rich environment with tons of plugins and extensions that add an extra ‘flair’ to the online shopping experience. Today, WooCommerce powers around 99% of all WordPress online stores.

Easy integration: because WooCommerce is a plugin rather than a standalone product, it may not match a full, purpose-built eCommerce platform feature for feature. However, its tight integration with WordPress is one of its main benefits.

In that vein, the combined power of these two increasingly popular platforms (including the extensive, ever-growing ecosystem of WordPress and WooCommerce plugins) removes all major constraints that online retailers face and allows you to become more creative across the board.

Whether you’re building your online store from scratch or trying to migrate your existing retail experience to WordPress, the relatively easy WooCommerce installation process can help you achieve both.

The general idea behind running any business is to scale. WooCommerce is very scalable, as it supports stores of all shapes and sizes, including small businesses with the potential to grow and large enterprises with already existing serious online demands.

Another huge benefit is that WooCommerce is fast. The powerful combination of WP and WooCommerce provides a responsive, fast, and reliable shopping experience for all stores, regardless of size.

The WooCommerce plugin also features powerful built-in analytics that help you understand your customers, their shopping habits, and how they interact with your store. In addition, you can also integrate WooCommerce with Google Analytics for a more thorough data analytics approach.

Magento (Adobe Commerce)

Adobe Commerce (formerly Magento) is an eCommerce platform that allows you to build and manage online stores. It’s mainly written in PHP, and it leverages parts of the MVC (model-view-controller) architecture to give both B2B and B2C users complete creative control over their stores.

In addition, Adobe Commerce features a variety of tools and resources. Some of those include search engine optimization, marketing, and product management tools.

In terms of scalability, many store owners have to switch to a different platform as their business grows. With Adobe Commerce, that shouldn’t be necessary. It is designed to scale with your business, however small or large it is, and with the size of your product inventory.

Finally, Adobe Commerce can be considered one of the most intuitive eCommerce platforms to use right now. It features a simple but powerful drag-and-drop system that anyone can use, regardless of previous coding experience or the need for additional developer support.

PrestaShop

PrestaShop is a powerful open-source eCommerce platform that makes it very easy to create, manage, and run an online store. It’s built with PHP and provides merchants with everything they need to create a full eCommerce experience for selling their products and services.

Some of the benefits include:

Open-source & free: PrestaShop is a 100% free and open-source platform with all the tools to build a fully functional e-shop in hours, if not minutes. The community is also always there to help.

Payment gateways: PrestaShop supports multiple payment gateways, including Amazon Pay, PayPal, First Data, Worldpay, and more. It also features more than 250 payment providers that can be used as add-ons.

Powerful marketing: It offers a range of tools to help your website trend online. Some of the more popular ones include tools for free shipping, email marketing, special offers, coupon codes, affiliate marketing, and many, many more.

PrestaShop currently powers around 270K stores, and that number steadily continues to grow.

Yii

Yii is a component-based PHP framework suitable for developing web applications quickly and efficiently. The name Yii can be read as an acronym for “Yes It Is!”. Working with Yii requires some object-oriented programming knowledge, since Yii functions mostly as an OOP framework.

Yii can be used to create any kind of web application, such as forums, CMS, eCommerce platforms, RESTful web services, applications that handle high volumes of traffic, and much more.

Yii2

Yii 2.0 is the second version of Yii, featuring several important updates to improve workflow and optimization. Some of these upgrades include: script management and assets, CSRF security tokens, multi-tier caching support, query builders, RBAC, validators, namespaces, Gii support, i18n support, and more.

Zend

Zend Framework, which now continues as the Laminas Project, is an open-source PHP framework made up of a collection of PHP packages built around the MVC design pattern. Its development workflow relies on three main tools:

Composer: managing package dependency

PHPUnit: package testing

Travis CI: continuous integration service

Currently, Zend can be used to create all kinds of web applications, services, and other web technologies.

Python

Python is an open-source, general-purpose, high-level programming language that supports object-oriented programming. It offers powerful features such as dynamic typing, dynamic binding, modules, classes, and rich built-in data types. You can use it to build both simple and complex applications, or as a scripting language that connects two or more existing components together.

Some of the more prominent features of Python include:

Intuitive coding: Python is a high-level language capable of performing complex operations to put together all kinds of applications for desktop, web, and (with the help of additional frameworks) mobile. Despite all that, Python is very easy to learn. Compared to other prominent languages (C, C++), most people can pick up the basics of Python in a matter of days. Mastering concepts like modules and advanced packages will understandably take longer.

Easily-readable syntax: Python features a considerably simpler syntax that doesn’t require semicolons at the end of statements or curly braces around code blocks. Code blocks are defined by indentation instead. You can usually tell what an application is supposed to do with a simple glance over the code.

Extensive standard library: the standard Python library offers powerful solutions for anyone to use. Modules for working with databases (sqlite3), regular expressions (re), and unit testing (unittest) are already included and don’t need to be written from scratch. Other tasks, such as image manipulation, are covered by widely used third-party packages like Pillow.

Portability: the same Python code can be used on different operating systems and machines. If you write Python code on a machine that uses Windows, you can usually run the same code on a Mac without changes, as long as it avoids platform-specific features.

Expressiveness: Python can solve complex problems using only a few lines of code. For example, a ‘Hello World’ program in Python 3 is a single line: print("Hello World!"). Note that Python 2 reached its end of life in 2020, so new projects should use Python 3.
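To make the points about syntax, the standard library, and expressiveness more concrete, here is a minimal sketch that relies only on standard-library modules (re, sqlite3, and collections). The function names, the words table, and the sample sentence are invented for this illustration.

```python
import re
import sqlite3
from collections import Counter


def word_counts(text: str) -> Counter:
    """Count words case-insensitively; indentation alone marks the body."""
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(words)


def store_counts(counts: Counter) -> list[tuple[str, int]]:
    """Save the counts to an in-memory SQLite database and read them back."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE words (word TEXT PRIMARY KEY, n INTEGER)")
        conn.executemany("INSERT INTO words VALUES (?, ?)", counts.items())
        rows = conn.execute(
            "SELECT word, n FROM words ORDER BY n DESC, word"
        ).fetchall()
    finally:
        conn.close()
    return rows


counts = word_counts("The quick fox and the lazy dog and the cat")
for word, n in store_counts(counts)[:3]:
    print(word, n)
```

Note that there are no braces or semicolons: the function bodies, the try block, and the loop are delimited purely by indentation, and nothing beyond a standard Python 3 installation is needed to run it.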

Python doesn’t have a shortage of backend frameworks, libraries, and tools.