João M.

Deep Learning Research Engineer

He specializes in building advanced models, including large language models (LLMs), capable of code refactoring, bug detection, and continual learning. João works extensively with PyTorch and deploys models on cloud platforms and high-performance computing systems.

Prior to ASML, he led research teams at GAIPS Lab, published in leading AI conferences, and secured competitive grants from the U.S. Air Force and FCT. He also taught AI courses, earning a Teaching Excellence Award for his contributions to education.

João’s key projects include advancing continual learning techniques that let AI acquire new knowledge without forgetting previous tasks, and applying reinforcement learning to train models more efficiently with less data. He is passionate about making AI systems more effective, more practical, and able to improve continually.

Experience

Deep Learning Research Engineer

- Led a research team on the "LLMs for Software Engineering" project, focusing on technical debt reduction, bug detection, and documentation analysis using Large Language Models.

- Designed, implemented, trained, tested, and deployed LLMs for automatic code refactoring and bug detection.

- Deployed models to cloud production environments and HPC distributed computing clusters.

- Continuously monitored the performance of deployed models using tools such as MLflow, Sacred, and Weights & Biases.

- Connected the company’s research department with academic partners at TU/e.

Deep Learning Research Engineer

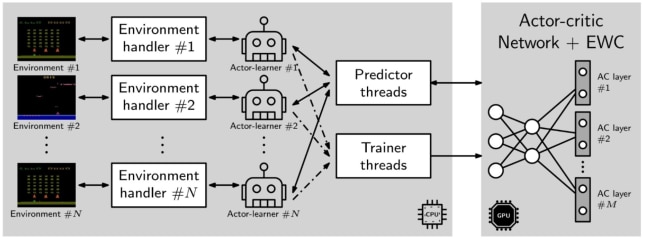

- Designed, implemented, trained, tested, and deployed state-of-the-art deep learning architectures, including Actor-Critics, DQNs, and LLMs, using convolutional, recurrent, and attention-based mechanisms for feature extraction across a wide range of tasks.

- Deployed models to cloud production environments on Google Cloud and AWS, and to Slurm-managed HPC distributed computing clusters.

- Continuously monitored the performance of deployed models using tools such as MLflow, Sacred, and Weights & Biases.

- Assembled the company’s HPC Slurm cluster.

- Led five research teams as first author, publishing one research paper per team in top-tier AI venues, including AAAI, IJCAI, ECAI, the Artificial Intelligence journal, and PLOS ONE.

- Presented AI research at top-tier international conferences such as AAAI, IJCAI, and ECAI.

- Secured two competitive funding grants, one from the U.S. Air Force Office of Scientific Research and another from the Portuguese Foundation for Science and Technology (FCT).

- Received the Best Paper award for the project “Helping People On The Fly: Ad Hoc Teamwork for Human-Robot Teams.”

Software Engineer

- Reduced technical debt and increased overall test coverage of the Top Sky Tower solution, a tool for air traffic controllers to manage electronic strips.

- Implemented and tested critical security detection systems.

Software Engineer

- Trained a Convolutional Neural Network to classify valid identity card images.

- Implemented software for automatic and periodic backups of the university’s records to the AWS cloud.

- Re-implemented legacy software using modern technologies such as Scala and Kotlin.

Assessments

Engineering excellence

João’s overall performance in a 90-minute live technical assessment ranks in the top 5% of vetted Deep Learning Research Engineers at Proxify.

Portfolio

Highlighted by João

This project investigates two hypotheses regarding the use of deep reinforcement learning (RL) across multiple tasks. The first asks whether a deep RL algorithm trained on two similar tasks can outperform two single-task, individually trained algorithms by learning a new, similar task more efficiently, where none of the three algorithms has encountered that task before. The second asks whether the same multi-task deep RL algorithm, trained on two similar tasks and augmented with elastic weight consolidation (EWC), can match the performance of a similar algorithm without EWC on the new task while also overcoming catastrophic forgetting on the two previous tasks.

We show that a multi-task Asynchronous Advantage Actor-Critic (GA3C) algorithm, trained on Space Invaders and Demon Attack, does outperform two single-task GA3C versions, each trained individually on one of those tasks, when evaluated on a new, third task, namely Phoenix.

We also show that, when two already-trained multi-task GA3C algorithms are further trained on the third task and one of them is augmented with EWC, the EWC-augmented version not only achieves similar performance on the new task but also overcomes a substantial amount of catastrophic forgetting on the two previous tasks.
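For readers unfamiliar with EWC, the idea can be sketched as a quadratic penalty that discourages weights important to earlier tasks from drifting while a new task is learned. The snippet below is a minimal, dependency-free illustration and not the project's implementation: it assumes a diagonal Fisher-information estimate of weight importance, and the names `ewc_penalty`, `total_loss`, `anchor_params`, `fisher_diag`, and `strength` are hypothetical.

```python
from typing import Sequence


def ewc_penalty(
    params: Sequence[float],
    anchor_params: Sequence[float],
    fisher_diag: Sequence[float],
    strength: float,
) -> float:
    """Return (strength / 2) * sum_i F_i * (theta_i - theta_star_i) ** 2.

    params        -- current weights while training on the new task
    anchor_params -- weights saved after training on the previous tasks
    fisher_diag   -- assumed per-weight importance (diagonal Fisher estimate)
    strength      -- hypothetical trade-off coefficient (often written lambda)
    """
    if not (len(params) == len(anchor_params) == len(fisher_diag)):
        raise ValueError("all inputs must have the same length")
    weighted_sq = sum(
        f * (p - a) ** 2 for p, a, f in zip(params, anchor_params, fisher_diag)
    )
    return 0.5 * strength * weighted_sq


def total_loss(
    new_task_loss: float,
    params: Sequence[float],
    anchor_params: Sequence[float],
    fisher_diag: Sequence[float],
    strength: float,
) -> float:
    """New-task loss plus the EWC penalty that protects earlier tasks."""
    return new_task_loss + ewc_penalty(params, anchor_params, fisher_diag, strength)


# Illustrative numbers only: the first weight is treated as important (F = 10.0),
# the second as unimportant (F = 0.5).
anchor = [1.0, 0.0]
fisher = [10.0, 0.5]
cheap_move = ewc_penalty([1.0, 2.0], anchor, fisher, strength=2.0)   # moves the unimportant weight
costly_move = ewc_penalty([2.0, 0.0], anchor, fisher, strength=2.0)  # moves the important weight
```

In this toy setting, moving the unimportant weight by 2 costs less than moving the important weight by 1, which is the mechanism that lets a model keep adapting to a new task while limiting forgetting on earlier ones.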
